Large database transaction log backups with Fabasoft COLD Loader

Last update: 01 February 2023 (cf)

Summary

Using Fabasoft COLD Loader can lead to large transaction log / redo log backups in the database. The cause may not be the imported data itself, but the Log object of the import. The Log object may also slow down imports.

Solution

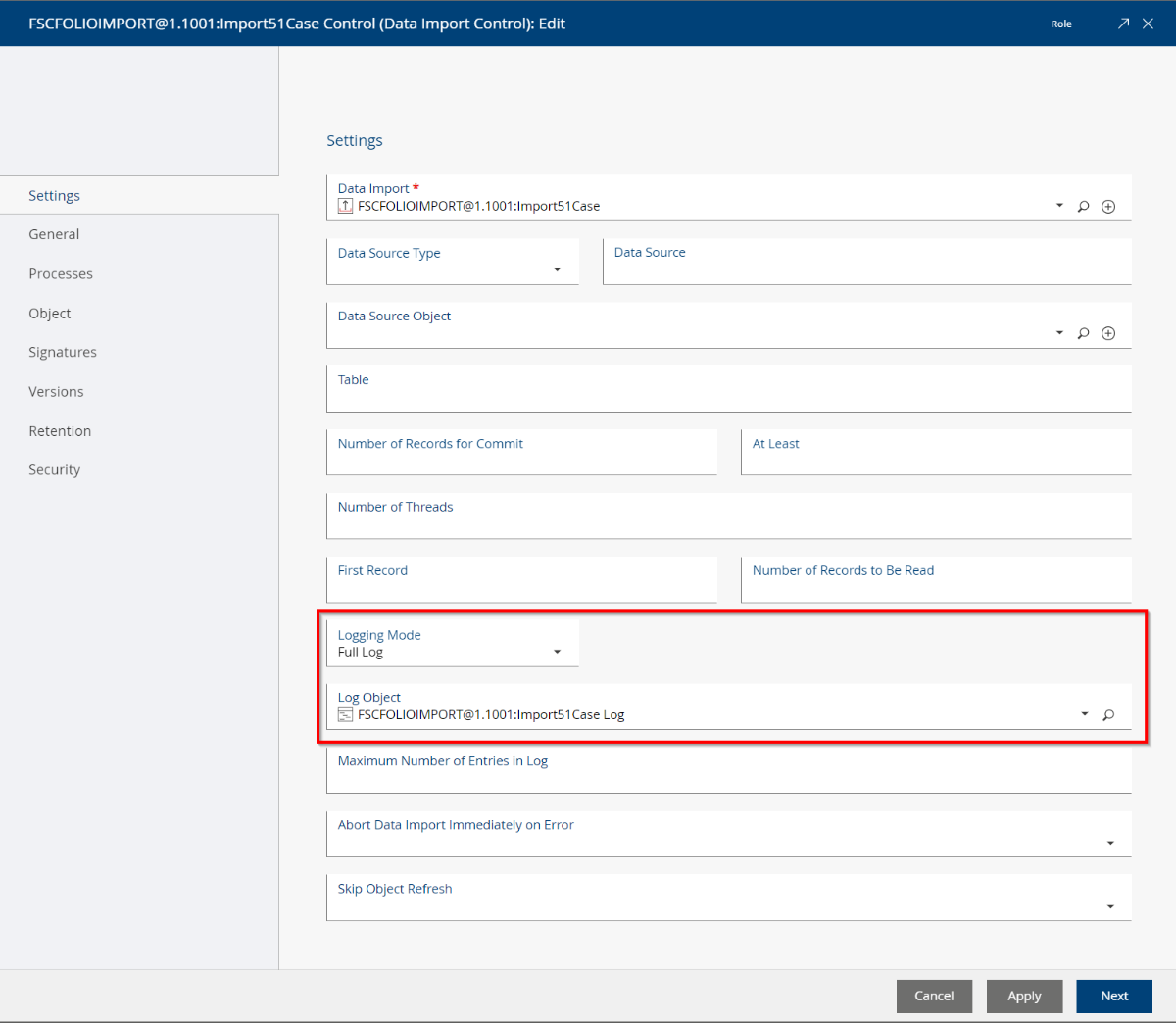

Check the following object classes for the configured logging options:

- Data Import Control objects (class FSCCOLD@1.1001:DataImportControl)

- Data Import objects (class FSCCOLD@1.1001:DataImport)

- Data Import (Component Object) objects (class FSCCOLD@1.1001:DataImportComponentObject)

Check the Logging Mode set on these objects. If it is currently set to Full Log, change it to No Log or Log Errors.

Furthermore, check the size of the current Log object and, preferably, unlink it (the next run will create a new Log object).

Details

The Log object stores every run as an entry in an aggregate list. Each list entry contains the number of imported objects and the textual log file. The list entry of a running import (e.g. “x Objects refreshed”) is updated and committed on the fly during the import, so every created, updated, or failed entry triggers another database commit. Because the internal management of the aggregate requires a full database write of the aggregate, the update statements required to maintain the log are considerable.

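Because the aggregate grows with every logged entry and is written in full each time, the volume of database updates for the Log object increases disproportionately during an import, which increases the transaction log backup size of the database. These updates also take more and more time, which slows down the import.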
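The effect of rewriting a growing log on every entry can be illustrated with a small back-of-the-envelope calculation. The following Python sketch is only a simplified model, not Fabasoft code: it assumes that every logged entry adds a fixed number of bytes to the log and that each commit writes the complete log again. The function names and the 100 bytes per entry are hypothetical values chosen for illustration.

```python
def full_rewrite_bytes(entries: int, bytes_per_entry: int) -> int:
    """Total bytes written if every new log entry rewrites the whole log.

    Simplified model (assumption): the log grows by a fixed amount per entry
    and is written in full on every commit.
    """
    total = 0
    log_size = 0
    for _ in range(entries):
        log_size += bytes_per_entry  # the log grows by one entry
        total += log_size            # the complete log is written again
    return total


def append_only_bytes(entries: int, bytes_per_entry: int) -> int:
    """Total bytes written if only the new entry were written each time."""
    return entries * bytes_per_entry


if __name__ == "__main__":
    for n in (1_000, 10_000, 100_000):
        full = full_rewrite_bytes(n, 100)
        plain = append_only_bytes(n, 100)
        print(f"{n:>7} entries: full rewrite {full / 1e6:,.1f} MB, "
              f"append only {plain / 1e6:,.1f} MB")
```

In this model, the data written grows roughly with the square of the number of logged entries, while the log itself grows only linearly. Real numbers depend on the database, the actual entry sizes, and how the aggregate is stored, so the sketch shows only the trend.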

Therefore, if no import log is required, the best option is to disable logging by setting the No Log option. If a log is required, use the Log Errors option.